Original Link: SSAS: Currency Conversion (Many-to-Many)

This article complements the book "SSAS Step by Step 2005". In the book, Reed Jacobson and Stacia Misner give guidelines and general directions for applying currency conversion based on many-to-many relationships, but they do not provide a step-by-step example. To fill that gap, I describe how I do it, and I hope you will join the discussion.

First of all, let's suppose that you have finished the exercises that come before the part "Supporting Currency Conversion", so that we can work from a properly built "SSAS" cube.

1. Go to the DSV, right-click the DSV pane, click Add/Remove Tables, add FactCurrencyRate and DimCurrency to the DSV and click "OK".

2. Right-click "Diagram Organizer" to create a new diagram, and add DimCurrency, FactCurrencyRate, FactInternetSales and DimTime to it.

3. Go to "Cube Structure" and right-click "Measures" to add a new measure group, "Fact Currency Rate".

4. After adding the measure group "Fact Currency Rate", change its "Type" property to "ExchangeRate", then expand it.

4.1 Right-click the measure "Average Rate" to show its properties, and change AggregateFunction to "AverageOfChildren".

4.2 Right-click the measure "End of Day-Date" to show its properties, and change AggregateFunction to "LastNonEmpty". Save the project.
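As a rough illustration of what the two aggregate functions chosen in step 4 do along the time dimension, here is a minimal Python sketch. The daily rates and the function names are made up for the example; only the behavior (average over non-empty children, value of the last non-empty child) mirrors the SSAS settings.

```python
from typing import Optional, Sequence


def average_of_children(values: Sequence[Optional[float]]) -> Optional[float]:
    """Average of the non-empty child values; empty children are skipped."""
    present = [v for v in values if v is not None]
    return sum(present) / len(present) if present else None


def last_non_empty(values: Sequence[Optional[float]]) -> Optional[float]:
    """Value of the last child that is not empty."""
    for v in reversed(values):
        if v is not None:
            return v
    return None


# Hypothetical daily rates for one currency; None marks a day without a rate.
daily_rates = [1.25, 1.5, None, 1.75, None]

print(average_of_children(daily_rates))  # 1.5
print(last_non_empty(daily_rates))       # 1.75
```

Neither function sums rates across days, which is why a plain Sum aggregation would be the wrong choice for an exchange rate measure.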

5. In Solution Explorer, right-click the folder "Dimensions" to create a new dimension using Dimension Wizard.

6. Make sure "Build the dimension using a data source" is selected and the Auto build check box is cleared, then click Next.

7. The available data source view should be "SSAS Step by Step DW". Click Next.

8. The dimension type should be "Standard dimension".

9. At the step "Select the Main Dimension Table", choose dbo.DimCurrency. The key column should be "CurrencyKey" and the member name can be "CurrencyName". Click Next.

10. At the step "Select Dimension Attributes", set the options as follows, and click Next.

11. At the step "Specify Dimension Type", set Currency ISO Code to Currency Alternate Key and set Currency Source to Dim Currency, which is in fact CurrencyKey. Click Next.

For Currency ISO Code, please refer to:

http://www.iso.org/iso/support/currency_codes_list-1.htm

12. At the step "Define Parent-Child Relationship", click Next.

13. At the step "Completing the Wizard", name the dimension as "Currency". Click Finish.

14. Double-click "Currency.dim" in Solution Explorer, then rename the attribute "Dim Currency" to "Currency" and "Currency Alternate Key" to "Currency ISO Code".

15. Go to Cube Structure and add Currency to the cube's dimensions. Save the project, then deploy it.

16. Right-click SSAS.cube and run the Business Intelligence Wizard. Choose "Define currency conversion" and click Next. (The project must be deployed before we start the BI Wizard.)

17. At the step "Set Currency Conversion Options", choose "Fact Currency Rate" and set other options like the following, then click Next.

18. Since we only use currency conversion to apply an exchange rate to measures, at the step "Select Members" we check only Reseller Sales Amount and Internet Sales Amount. Click Next.

19. Select "Many-to-Many" as the conversion type, then click Next.

20. The step "Define Local Currency Reference" will look like the following. Usually we do not need to change anything, so we just click Next.

For reference, see http://msdn.microsoft.com/en-us/library/ms175660.aspx

Local currency:

The currency used to store transactions on which measures to be converted are based.

The local currency can be identified by either:

A currency identifier in the fact table stored with the transaction, as is commonly the case with banking applications where the transaction itself identifies the currency used for that transaction.

A currency identifier associated with an attribute in a dimension table that is then associated with a transaction in the fact table, as is commonly the case in financial applications where a location or other identifier, such as a subsidiary, identifies the currency used for an associated transaction.
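To make the idea of a many-to-many conversion concrete, here is a minimal Python sketch, not the script the BI Wizard generates. It assumes each fact row carries its own local currency identifier and that rates are stored as units of a pivot currency per one unit of each currency; the dates, rates, amounts and function names are all hypothetical.

```python
from collections import defaultdict

# Hypothetical rates: units of the pivot currency (USD here) per 1 unit of
# each currency. The rate direction is an assumption made for this sketch.
RATES = {
    ("2012-11-16", "USD"): 1.0,
    ("2012-11-16", "EUR"): 1.25,
    ("2012-11-16", "NOK"): 0.125,
}

# Hypothetical fact rows: (date, local currency, amount in local currency).
SALES = [
    ("2012-11-16", "EUR", 100.0),
    ("2012-11-16", "NOK", 800.0),
    ("2012-11-16", "USD", 50.0),
]


def convert(amount, date, local, reporting, rates=RATES):
    """Local currency -> pivot currency -> reporting currency."""
    in_pivot = amount * rates[(date, local)]
    return in_pivot / rates[(date, reporting)]


def totals_by_reporting_currency(sales, reporting_currencies):
    """Total of all rows, expressed once per requested reporting currency."""
    totals = defaultdict(float)
    for date, local, amount in sales:
        for reporting in reporting_currencies:
            totals[reporting] += convert(amount, date, local, reporting)
    return dict(totals)


totals = totals_by_reporting_currency(SALES, ["USD", "EUR", "NOK"])
print(totals)  # {'USD': 275.0, 'EUR': 220.0, 'NOK': 2200.0}
```

Many local currencies go in and many reporting currencies come out, which is the "many-to-many" in the conversion type selected in step 19.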

21. At the step "Specify Reporting Currencies", select all reporting currencies, click Next.

22. At the step "Completing the Wizard", notice that the BI Wizard will add a currency conversion section to the cube's script. This also gives us a way to roll back: if something is wrong with the result generated by the BI Wizard, we can open the cube's script, find the currency conversion section, delete it, and deploy the project again to return to the state before the wizard ran.

Click Finish and save the project.

23. Go to the DSV, where we can find a new named query, "Reporting Currency". Set CurrencyKey as its logical primary key, then relate "Reporting Currency" to "FactInternetSales" and "FactResellerSales" by dragging CurrencyKey to them.

24. Go to Dimension Usage. Click the intersection of Reporting Currency and Fact Currency Rate, and set a regular relationship between them as follows.

25. Click the intersection of Reporting Currency and Internet Sales, and set a many-to-many relationship between them as follows; then do the same for Reseller Sales. Save the project.

26. Deploy the project. We may run into the following error, which tells us that something is wrong with the named query "Reporting Currency".

-----------------------------------------------------------------------------------------

Error 1 Dimension 'Reporting Currency' > Attribute 'Currency' : The 'Integer' data type is not allowed for the 'NameColumn' property; 'WChar' should be used. 0 0

-----------------------------------------------------------------------------------------

27. Double-click "Reporting Currency.dim" in Solution Explorer and check the properties of the attribute "Currency" of Reporting Currency. We can see that the BI Wizard incorrectly set the data type of NameColumn to Integer. Reset it to WChar, then deploy again. This time the deployment runs into another error: "Conversion failed when converting the varchar value 'Afghani' to data type int".

28. To solve it, go to the DSV and right-click Reporting Currency to edit the named query.

29. Notice that the query tries to union 2147483647, which is an integer, with CurrencyName, which is varchar. This is probably the cause of the error.

So let's update the second part of the union as follows:

---------------------------------------------------------------------

SELECT DISTINCT NULL AS [Local 0], Null AS Local, NULL AS [Local 2]

FROM DimCurrency

---------------------------------------------------------------------

When we deploy again, we run into another error: it seems there has to be a member called [Local] in Reporting Currency.dim.

So let's edit the named query again, this time updating the second part of the union as follows, and deploy the cube:

---------------------------------------------------------------------

SELECT DISTINCT NULL AS [Local 0], 'Local' AS Local, NULL AS [Local 2]

FROM DimCurrency

---------------------------------------------------------------------

Deployment Completed Successfully!!!
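Why the three variants of the union behave differently can be mimicked with a small Python sketch. SQL Server resolves mixed types in a UNION by data type precedence, where int ranks above varchar, which is consistent with the conversion error in step 27. The sketch below is only a toy model of that rule: the two-entry precedence table and the function name are assumptions for illustration, not SQL Server's implementation.

```python
# Toy precedence table: the higher number wins when union branches disagree.
PRECEDENCE = {str: 1, int: 2}


def union_column(values):
    """Coerce one union column to its highest-precedence type; None stands for NULL."""
    present = [v for v in values if v is not None]
    if not present:
        return list(values)
    target = max((type(v) for v in present), key=PRECEDENCE.__getitem__)
    return [None if v is None else target(v) for v in values]


names = ["Afghani", "US Dollar"]

# Original shape: an integer sentinel unioned with currency names.
try:
    union_column(names + [2147483647])
except ValueError as exc:
    print("conversion failed:", exc)

# NULL unions cleanly, but leaves no member named 'Local' behind.
print(union_column(names + [None]))

# A string literal keeps the column character-typed and supplies the member.
print(union_column(names + ["Local"]))
```

The first call fails for the same reason the deployment did: the names are forced toward the integer type. The last call corresponds to the final version of the named query.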

30. In the Cube Browser, drag in Currency from Reporting Currency, Average Rate from Fact Currency Rate, and the measures Reseller Sales Amount and Internet Sales Amount to see the final result!

31. Properties of the Currency attribute of Reporting Currency (to be continued)

Because the attributes "Currency" and "Currency ISO Code" are not groupable attributes, we should set the property "AttributeHierarchyEnabled" to False, but we need to set "IsAggregatable" to True. I will come back to this later.


Friday, November 16, 2012

SSAS: Currency Conversion (Many-to-Many)

Labels: conversion, currency, dimension, dsv, euro, implement, many to many, many-to-many, mdx, nok, OLAP, ssas, Time, usd, valuta

Monday, October 8, 2012

Thursday, October 4, 2012

How Much Data is Created Every Minute?

Posted by Neil Spencer

The Internet has become a place where massive amounts of information and data are being generated every day. Big data isn’t just some abstract concept created by the IT crowd, but a continually growing stream of digital activity pulsating through cables and airwaves across the world. This data never sleeps: every minute giant amounts of it are being generated from every phone, website and application across the Internet. The question: how much is created, and where does it all come from?

To put things into perspective, this infographic by DOMO breaks down the amount of data generated on the Internet every minute. YouTube users upload 48 hours of video, Facebook users share 684,478 pieces of content, Instagram users share 3,600 new photos, and Tumblr sees 27,778 new posts published. These are sites many people around the world use on a regular basis, and will continue to use in the future. The global Internet population now represents 2.1 billion people, and with every website browsed, status shared, and photo uploaded, we leave a digital trail that continually grows the hulking mass of big data. See where else big data is coming from in the infographic below.


Sunday, September 30, 2012

IT Training By Experts

At Technitrain we believe the best way to learn a technology is to learn from an expert. Our courses are taught by top consultants who have a wealth of hands-on experience to share and who can answer all of your difficult questions. You'll acquire the practical skills you need to do your job as well as learn the tips and tricks that only the experts know.

Here is the link to more information.

Our trainers are the best in their field: Microsoft MVPs, authors and well-known bloggers such as Chris Webb, Gavin Payne, Christian Bolton.


Labels: 70-448, Business intelligence, course, exam, guarantee, learn, mcts, mdx, microsoft, money back, ms sql, pass, sql

Friday, September 28, 2012

The Big Data Fairy Tale

By Roel Castelein

Fairy tales usually start with ‘Once upon a time ...' and end with ‘... And they lived long and happily ever after'. But nobody explains ‘how' the heroes live long and happily ever after. Big data (analytics) promise to transform your business, but just as in fairy tale endings, big data will not explain ‘how' to transform your organization. In my view, big data might spark some behavioral change or open people's minds, but it will not transform organizations. At best, big data evolves organizations. Let's look at the concept and a concrete example to draw conclusions.

What big data analytics does is take a bunch of data, analyze and visualize it, and then derive insights that potentially can improve your organization or business. Based on these insights the actual transformation can begin, but it requires more than just big data. Let's have a look at a classic example of data analytics; the reduction of crime in New York under Mayor Giuliani with the help of CompStat.

CompStat is a data system that maps crime geographically and in terms of emerging criminal patterns, as well as charting officer performance by quantifying criminal apprehensions. The key to success was not the data or analysis, but that the organizational management that used the data and analysis was effective. Processes, structures and accountability were set up to drive the transformation. In weekly meetings, NYPD executives met with local precinct commanders from the eight boroughs in New York to discuss the problems. They devised strategies and tactics to solve problems, reduce crime, and ultimately improve quality of life in their assigned area. CompStat tracked the results of these strategies and tactics, and whether they were successful or not. Precinct commanders were held accountable for the results.

Drawing upon my own experience, I know how difficult an organizational transformation is. Even if you have the data and the analysis that shows things need to change, it requires much more than data analysis. Let's assume that the data uncovers opportunities for improvement, either in reducing cost or in increasing revenue. The next step is to design the changes in processes, in people's roles, in org charts and in the systems. This usually entails a two-pronged approach: communicate the change in org charts, processes and roles, and ingrain these changes in the systems to track the change results. This tracking creates a feedback loop, necessary to manage the transformation.

Another challenge in the big data transformation message is finding the right people. Ideally the team leading the transformation needs to understand an organization's data, enriched with outside data, then know how to do data analysis, and once the results are there, strategically communicate the change to get everybody on board. Next, the transformation team needs to set up a tracking and feedback process that holds participants accountable for the transformation results. And when participants do not play along, have an escalation process in place, with the possibility for punitive measures.

In the same way that Giuliani fired one of the precinct commanders when he showed up drunk at the first CompStat meeting, big data systems require a complementary management philosophy to ensure whatever transformational insights are derived get implemented and controlled.

So, when the advertisements claim that big data will transform your business, remember that big data brings the potential for transformation, not the actual transformation. That still requires commitment and hard work, just like ‘living long and happily ever after'. That's why they are called fairy tales.

Friday, September 21, 2012

How Big Data Brings BI and Predictive Analytics Together

Big data is breathing new life into business intelligence by putting the power of prediction into the hands of everyday decision-makers.

For as long as anyone can remember, the world of predictive analytics has been the exclusive realm of ivory-tower statisticians and data scientists who sit far away from the everyday line of business decision maker. Big data is about to change that.

As more data streams come online and are integrated into existing BI, CRM, ERP and other mission-critical business systems, the ever-elusive (and oh so profitable) single view of the customer may finally come into focus. While most customer service and field sales representatives have yet to feel the impact, companies such as IBM and MicroStrategy are working to see that they do soon.

Big Data Moves Analytics Beyond Pencil-Pushers

Imagine a world in which a CSR sitting at her console can make an independent decision on whether a problem customer is worth keeping or upgrading. Imagine, too, that a field salesman can change a retailer's wine rack on the fly based on the preferences that partiers attending the jazz festival next weekend have contributed on Facebook and Twitter.

Big data is pushing a tool more commonly used for cohort and regression analysis into the hands of line-level managers, who can then use non-transactional data to make strategic, long-term business decisions about, for example, what to put on store shelves and when to put it there.

However, big data is not about to supplant traditional BI tools, says Rita Sallam, Gartner's BI analyst. If anything, big data will make BI more valuable and useful to the business. "We're always going to need to look at the past…and when you have big data, you are going to need to do that even more. BI doesn't go away. It gets enhanced by big data."

How else will you know whether what you are seeing in the initial phases of discovery will indeed bear out over time? For example, do red purses really sell better than blue ones in the Midwest? An initial pass through the data may suggest so: more red purses sold last quarter than ever before; therefore, red purses sell better.

But this is a correlation, not a cause. If you look more closely, using historical transaction data gleaned from your BI tools, you may find, say, that it is actually your latest merchandise-positioning-campaign that's paying dividends because the retailers are now putting red purses at eye level.

That's why IBM's Director of Emerging Technologies, David Barnes, is actually more inclined to refer to the resulting output from big data technologies such as Hadoop, map/reduce and R as "insights." You wouldn't want to make mission-critical business decisions based on sentiment analysis of a Twitter stream, for example.


Labels: analyse, bi, Biga, bring, business, cognos, data, data analytics, Hadoop, ibm, Informatica, intelligence, jaspersoft, microsoft, Predictive, sap, together

Thursday, September 6, 2012

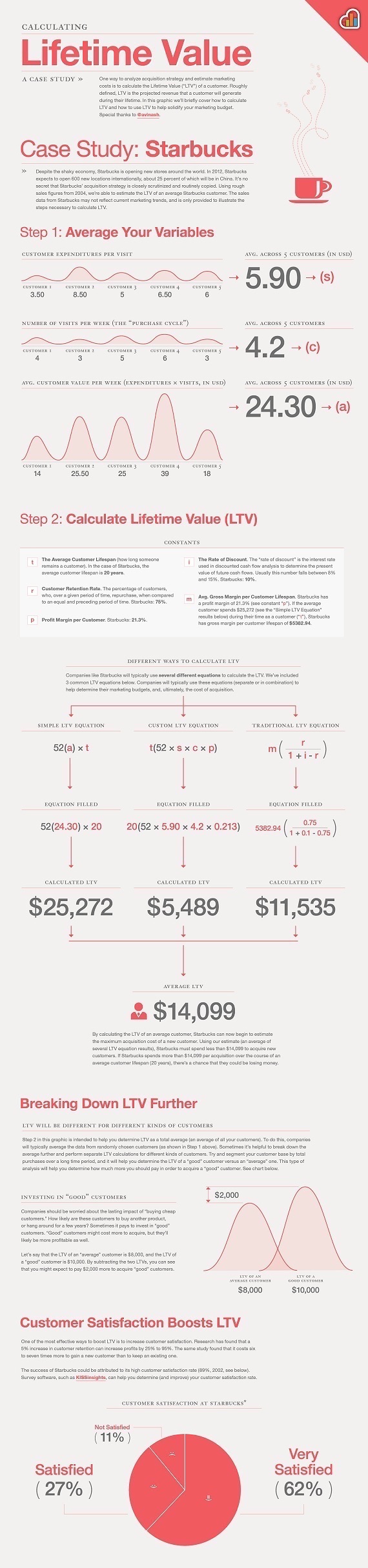

Infographic explains Lifetime Value of a Customer

Tuesday, August 28, 2012

Nordic Choice Business Intelligence

Here is a video that shows the work that my BI colleagues from Nordic Choice Hotels and I have done over the last 2 years.

Also we collaborated with Platon (www.platon.net) in UX and Dashboard Designing of our Business Intelligence Solution.

A Visionary Choice - Nordic Choice Hotels Business Intelligence vision from Platon on Vimeo.


Labels: analytics, business, company, data, hotels, intelligence, models, Nordic Choice, OLAP, petter, Predictive, solution, stordalen, technology, Visionary, work

Data Analytics see the World differently

Labels: analysis, analytics, Big data, bsuiness, conference, developers, inspiring, intelligence, professionals, see, teradata, tutorials, video, world

Thursday, August 23, 2012

A BI Architectures Approach to Modeling and Evolving with Analytic Databases

Major shifts converging in today's BI environment bring the opportunity to discover new answers to old questions about what BI architectures are about and how they are designed.

August 21, 2012

By John O'Brien, Principal, Radiant Advisors

Whether you have been a BI architect managing a production data warehouse for many years or are embarking on building a new data warehouse, the new analytic technologies coming out today have never been so powerful and complex to understand. In fact, with so many analytic technologies available on the market, they are somewhat overwhelming as we struggle to make sense of what to do with them and which ones to use with our existing environments.

This is good for BI architects because it brings us back to BI architecture fundamentals, key data management principles, pattern recognition, and agile processes, along with BI capabilities that challenge classic BI architecture best practices to design what clearly makes sense to meet the demands of business today.

There are major shifts converging in today's BI environment, and these changes bring with them the opportunity to discover new answers to old questions about what a BI architecture is all about and how an architecture is designed. As I explore these questions in this article, I will focus on three main themes: BI architectures are strategic platforms that evolve to their full potential; good architectures are based on recognizing data management principles (patterns and so-called best practices are discovered later); and BI architecture design is purposeful at every stage of development and technology decisions follow this purpose.

Architecture Maturity and Information Capabilities

We have all seen the research that says BI architectures evolve into robust information platforms and value over time. BI teams have focused on increasing value through maturing their data warehouses from operational reporting to data marts to data warehouses and finally to enterprise data warehouses. This evolutionary approach is typical when balancing pressing tactical needs for information delivery with strategic development, and is found in many companies where business demands for information drive towards a data warehouse platform.

The classic data warehouse is the last thing to be built, if ever, because the emphasis remains on quicker delivery first and information consistency later. Unfortunately, this leads to data warehouses that reflect current information needs and don't foster the evolution of a mature analytics culture.

Instead, an architecture based on BI capabilities focuses on nurturing the analytic culture of the business community by first educating user communities about the BI capabilities available and then on business subject data that is delivered via BI capabilities. This approach centers the data warehouse architecture on BI capabilities such as information delivery; reporting and parameterized reporting; dimensional analytics for goals achievement; and advanced analytics for gaining insights, to name a few. These discussions recognize that the same consistent data has many usage patterns, behaviors, and roles in the decision process. A BI architecture that is designed in this way ensures that data models and chosen analytic technologies are best suited to their intended purpose.

However, this BI-capabilities approach is contrary to some BI architects' belief that there should be an all-in-one data warehouse platform in the enterprise.

Thursday, July 26, 2012

PFI - Personal Finance Intelligence

This research work will be published in IJSER (International Journal of Science and Engineering Research), 8 August Edition 2012. Part of the research paper will be posted here:

ELegant Analytics - ELA presents

Personal Finance Intelligence

(First part)

Introduction

Medical doctors always say that the best medicine is preventive medicine, and research shows that people do very little in this direction. The subject of this research is not medicine but something no less important: the economy.

Financial crises have happened before, are happening now and will happen in the future unless we create a preventive plan. One of the solutions that aims to be part of a “preventive medicine” for financial crises comes from the data laboratories of ELA (ELegant Analytics), and its name is PFI.

What is PFI?

Personal Finance Intelligence (PFI) is the name of a Business Intelligence solution for personal finance and budget planning. Inspired by the TV show “Luksusfellen” (a show broadcast in Norway about people who struggle with their finances while living a luxurious life; the title translates as “The Luxury Trap”), this Business Intelligence approach may be a solution for all those who fail to manage their own finances well, for those who want their finances to perform better and, last but not least, for the bank itself.

The purpose of this project is to create a Customer Analytical Cube that processes each bank customer's history for the customer's own benefit and answers the most important questions that customers and the bank itself have.

The solution will also include benchmarking against an imaginary subject, which can be the Min, Max or Avg of customer values in a set defined by, for example, region, period of time, age group, sex or income range.
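The benchmarking idea can be sketched in a few lines of Python. This is only an illustration under stated assumptions: the customer IDs and monthly cost figures are invented, the peer group is assumed to be already filtered (same region, age group and so on), and the customer is counted as part of his/her own peer group.

```python
from statistics import mean

# Hypothetical monthly costs for customers in one peer group.
peer_costs = {"c1": 1200.0, "c2": 1500.0, "c3": 900.0, "c4": 1800.0}


def benchmark(customer_id, costs):
    """Compare one customer with the Min, Max and Avg of the peer set."""
    peers = list(costs.values())
    value = costs[customer_id]
    average = mean(peers)
    return {
        "value": value,
        "min": min(peers),
        "max": max(peers),
        "avg": average,
        "diff_from_avg": value - average,
    }


result = benchmark("c2", peer_costs)
print(result)
```

Here customer "c2" spends 150.0 more than the peer average of 1350.0, which is the kind of answer the cube would return to the customer.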

To give users more control over planning their own finances, targeting will be an option: users (bank customers) can set targets for costs or income a month, a quarter or a year ahead, and they will be warned whenever they are about to reach the targeted cost amount.
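A target warning of this kind could look like the following Python sketch. The function name, the status labels and the 90% warning threshold are assumptions chosen for the example; the paper does not specify them.

```python
def target_status(spent, target, warn_ratio=0.9):
    """Classify spending against a cost target set by the customer.

    warn_ratio is a hypothetical threshold: the share of the target at
    which the customer starts to be warned.
    """
    if target <= 0:
        raise ValueError("target must be positive")
    ratio = spent / target
    if ratio >= 1.0:
        return "reached"
    if ratio >= warn_ratio:
        return "warning"
    return "ok"


print(target_status(500.0, 1000.0))   # ok
print(target_status(950.0, 1000.0))   # warning
print(target_status(1000.0, 1000.0))  # reached
```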

The project is also meant to be used by the bank itself when it wants to evaluate a customer and his/her behavior with regard to financial stability, because today's credit scoring systems lack some important data that could make decisions more accurate. Customer behavior is equally important for the bank, so that it knows what type of customer it is dealing with and how he/she handles his/her finances.

Focus and goals

The main focus of this project is the customer, his/her history and his/her behavior.

Our first goal is to make it possible to collect customer data at the smallest possible transaction granularity, without impersonating data. This way, the bank operates on the complete data and can dive into details securely and lawfully. Our second goal is to apply Business Intelligence to the customer's historical data in order to give alerts and advice where he/she is performing badly and support where he/she is doing well.

Our third goal is to show where the customer stands compared with the region where he/she lives and with his/her age group, sex and income. This will help the customer improve savings and cut costs by showing how people around him/her manage on the same budget.

Our fourth, but no less important, goal relates to the bank itself: the bank can get a clear financial picture of its customer and decide much better than with a credit scoring system alone.

This system lowers risk and improves customer loyalty.

Project Highlights:

Data center that has the capacity for:

• Data transfer once a day or live-data (for the bank side)

• Centralized Customer Intelligence for the Entire Bank

• Live Data transfer and access

• Separate service for each client

• Client vs. Average, Max or Min of a set of clients (Benchmarking)

• Other Intelligence analysis (Geography, age, sex etc…)

Processes on the fly (Administration and Maintenance):

• Optimizing ETL

• Optimizing DB and DWH

• Optimizing Indexing and Data Volume

• Query Performance regarding MDX calculated measures

Project Closure Recommendations

It is strongly recommended that privacy and security issues concerning customers' credential information be given high consideration.

It is also strongly recommended that user impersonation for the data source and the reported data be solved in the best possible way, from data source security through to the security of cube role groups.

Another recommendation concerns planning for data volume and for query performance against a large volume of data in a production environment.

Data Architecture of the PFI Solution

The database is the main source of data and usually accumulates data entries and daily transactions (entered manually or automatically). In our case, this Customer DB is the main data source for bank customer data and for the solution itself. It usually consists of tables holding different information and transaction data. The problem is that the DB holds raw data and is not organized, calibrated and optimized for the needs of the business. So, from the database we create, or transform it into, a data warehouse.


* If you want to download the full research just click here.

ELegant Analytics - ELA presents

Personal Finance Intelligence

(First part)

Introduction

Medical doctors always say that the best medicine is the preventive one and research shows that humans work very little on this direction. The subject of this research is not Medicine, but not less important, Economy.

Financial crisis happened before, is happening now and will happen in future if we do not create a preventive plan. One of the solutions that aim to be part of a “preventive medicine” for financial crises is produced in the data laboratories of ELA (ELegant Analytics) and its name is PFI.

What is PFI?

Personal Finance Intelligence (PFI) is the name of a Business Intelligence solution for personal finance and budget planning. Inspired by the TV show “Luksusfellen” (“The Luxury Trap”, a show broadcast in Norway about people who struggle with their finances while living a luxurious life), this Business Intelligence approach may be a solution for those who fail to manage their own finances well, for those who want to improve their finances and, last but not least, for the bank itself.

The purpose of this project is to create a Customer Analytical Cube that processes the data of each bank customer, using his/her history for his/her own benefit, and then answers the most important questions that customers and the bank itself have.

This solution will also include benchmarking against an imaginary subject, which can be the Min, Max or Avg of the customers' values in a set defined by a certain region, period of time, age group, sex, income range, etc.
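The benchmarking idea can be sketched in a few lines of Python. This is only an illustration under assumptions: the record fields (`region`, `age_group`, `monthly_costs`), the sample values and the `benchmark` helper are hypothetical and not taken from the PFI solution, which would compute such figures inside the cube.

```python
from statistics import mean

# Hypothetical sample data; field names and values are assumptions for illustration.
customers = [
    {"id": 1, "region": "Oslo", "age_group": "30-39", "monthly_costs": 1200},
    {"id": 2, "region": "Oslo", "age_group": "30-39", "monthly_costs": 800},
    {"id": 3, "region": "Oslo", "age_group": "30-39", "monthly_costs": 1000},
    {"id": 4, "region": "Bergen", "age_group": "30-39", "monthly_costs": 1500},
]


def benchmark(customer, population, measure="monthly_costs", **filters):
    """Compare one customer with the Min, Max and Avg of a filtered peer set."""
    peers = [
        p for p in population
        if all(p.get(key) == value for key, value in filters.items())
    ]
    if not peers:
        raise ValueError("no customers match the chosen set")
    values = [p[measure] for p in peers]
    reference = {"min": min(values), "max": max(values), "avg": mean(values)}
    # A positive difference means the customer is above the imaginary subject.
    return {name: customer[measure] - value for name, value in reference.items()}


result = benchmark(customers[0], customers, region="Oslo", age_group="30-39")
print(result)
```

In this sketch the peer set includes the customer being compared; whether to exclude him/her is a design choice the text leaves open.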

To give users more control over planning their own finances, targeting will be an option: users (bank customers) can set targets for costs or income a month, a quarter or a year ahead, and will be warned whenever they are about to reach the targeted cost amount.
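A minimal sketch of such a target warning follows. The function name `target_status` and the 90% warning threshold (`warn_ratio`) are assumptions made for illustration; the text does not say at which point a customer would be warned.

```python
def target_status(spent, target, warn_ratio=0.9):
    """Classify spending against a cost target set by the customer.

    warn_ratio is a hypothetical threshold: the share of the target
    at which the customer starts receiving a warning.
    """
    if target <= 0:
        raise ValueError("target must be positive")
    if spent >= target:
        return "exceeded"
    if spent >= warn_ratio * target:
        return "warning"
    return "ok"


for spent in (400, 480, 520):
    print(spent, target_status(spent, target=500))
```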

The project is also meant to be used by the bank itself when it wants to evaluate a customer and his/her behaviour regarding financial stability, because today's credit scoring systems lack some important data that could make decisions more accurate. Customer behaviour will be just as important for the bank, so that the bank knows what type of customer it is dealing with and how he/she handles his/her finances.

Focus and goals

The main focus of this project is the customer, his/her history and his/her behaviour.

Our first goal is to make it possible to collect customer data at the smallest possible transaction granularity without impersonating the data. This way, the bank works with the whole data set and dives into the details securely and lawfully. Our second goal is to apply Business Intelligence to the customer's historical data in order to give alerts and advice where he/she is performing badly, and support where he/she is doing well.

Our third goal is to see where the customer stands compared with the region where he/she lives and with his/her age group, sex and income. This will help the customer improve savings and cut costs by showing how people around him/her manage on the same budget.

Our fourth, but no less important, goal relates to the bank itself: the bank can have a clear financial picture of its customer and can decide much better than with a credit scoring system.

This system lowers risk and improves customer loyalty.

Project Highlights:

Data center that has the capacity for:

• Data transfer once a day or live data (for the bank side)

• Centralized Customer Intelligence for the Entire Bank

• Live Data transfer and access

• Separate service for each client

• Client vs. Average, Max or Min of a set of clients (Benchmarking)

• Other Intelligence analysis (Geography, age, sex etc…)

Processes on the fly (Administration and Maintenance):

• Optimizing ETL

• Optimizing DB and DWH

• Optimizing Indexing and Data Volume

• Query Performance regarding MDX calculated measures

Project Closure Recommendations

It is strongly recommended that privacy and security issues regarding customers' credential information be given high consideration.

It is also highly recommended that user impersonation for the data source and the reported data be handled in the best possible way, covering everything from data source security to cube role group security.

Another recommendation concerns planning for data volume and for query performance against a large volume of data in a production environment.

Data Architecture of the PFI Solution

The database is the main source of data and is usually an accumulator of data entry and daily transactions (manual or automated). In our case, the Customer DB is the main data source for bank customer data and for the solution itself. It usually consists of tables that hold different information and transaction data. The problem is that the DB holds raw data that is not organized, calibrated or optimized for the needs of the business. So, from the database we create a data warehouse, or transform the database into one.

* If you want to download the full research just click here.

Tuesday, July 24, 2012

The Future of Decision Making: Less Intuition, More Evidence

A fantastic post by Andrew McAfee

Human intuition can be astonishingly good, especially after it's improved by experience. Savvy poker players are so good at reading their opponents' cards and bluffs that they seem to have x-ray vision. Firefighters can, under extreme duress, anticipate how flames will spread through a building. And nurses in neonatal ICUs can tell if a baby has a dangerous infection even before blood test results come back from the lab.

The lexicon to describe this phenomenon is mostly mystical in nature. Poker players have a sixth sense; firefighters feel the blaze's intentions; nurses just know what seems like an infection. They can't even tell us what data and cues they use to make their excellent judgments; their intuition springs from a deep place that can't be easily examined. Examples like these give many people the impression that human intuition is generally reliable, and that we should rely more on the decisions and predictions that come to us in the blink of an eye.

This is deeply misguided advice. We should rely less, not more, on intuition.

A huge body of research has clarified much about how intuition works, and how it doesn't. Here's some of what we've learned:

• It takes a long time to build good intuition. Chess players, for example, need 10 years of dedicated study and competition to assemble a sufficient mental repertoire of board patterns.

• Intuition only works well in specific environments, ones that provide a person with good cues and rapid feedback. Cues are accurate indications about what's going to happen next. They exist in poker and firefighting, but not in, say, stock markets. Despite what chartists think, it's impossible to build good intuition about future market moves because no publicly available information provides good cues about later stock movements. Feedback from the environment is information about what worked and what didn't. It exists in neonatal ICUs because babies stay there for a while. It's hard, though, to build medical intuition about conditions that change after the patient has left the care environment, since there's no feedback loop.

• We apply intuition inconsistently. Even experts are inconsistent. One study determined what criteria clinical psychologists used to diagnose their patients, and then created simple models based on these criteria. Then, the researchers presented the doctors with new patients to diagnose and also diagnosed those new patients with their models. The models did a better job diagnosing the new cases than did the humans whose knowledge was used to build them. The best explanation for this is that people applied what they knew inconsistently: their intuition varied. Models, though, don't have intuition.

• It's easy to make bad judgments quickly. We have many biases that lead us astray when making assessments. Here's just one example. If I ask a group of people "Is the average price of German cars more or less than $100,000?" and then ask them to estimate the average price of German cars, they'll "anchor" around BMWs and other high-end makes when estimating. If I ask a parallel group the same two questions but say "more or less than $30,000" instead, they'll anchor around VWs and give a much lower estimate. How much lower? About $35,000 on average, or half the difference in the two anchor prices. How information is presented affects what we think.

• We can't tell where our ideas come from. There's no way for even an experienced person to know if a spontaneous idea is the result of legitimate expert intuition or of a pernicious bias. In other words, we have lousy intuition about our intuition.

My conclusion from all of this research and much more I've looked at is that intuition is similar to what I think of Tom Cruise's acting ability: real, but vastly overrated and deployed far too often.

So can we do better? Do we have an alternative to relying on human intuition, especially in complicated situations where there are a lot of factors at play? Sure. We have a large toolkit of statistical techniques designed to find patterns in masses of data (even big masses of messy data), and to deliver best guesses about cause-and-effect relationships. No responsible statistician would say that these techniques are perfect or guaranteed to work, but they're pretty good.

The arsenal of statistical techniques can be applied to almost any setting, including wine evaluation. Princeton economist Orley Ashenfelter predicts Bordeaux wine quality (and hence eventual price) using a model he developed that takes into account winter and harvest rainfall and growing season temperature. Massively influential wine critic Robert Parker has called Ashenfelter an "absolute total sham" and his approach "so absurd as to be laughable." But as Ian Ayres recounts in his great book Super Crunchers, Ashenfelter was right and Parker wrong about the '86 vintage, and the way-out-on-a-limb predictions Ashenfelter made about the sublime quality of the '89 and '90 wines turned out to be spot on.

Those of us who aren't wine snobs or speculators probably don't care too much about the prices of first-growth Bordeaux, but most of us would benefit from accurate predictions about such things as academic performance in college; diagnoses of throat infections and gastrointestinal disorders; occupational choice; and whether or not someone is going to stay in a job, become a juvenile delinquent, or commit suicide.

I chose those seemingly random topics because they're ones where statistically-based algorithms have demonstrated at least a 17 percent advantage over the judgments of human experts.

But aren't there at least as many areas where the humans beat the algorithms? Apparently not. A 2000 paper surveyed 136 studies in which human judgment was compared to algorithmic prediction. Sixty-five of the studies found no real difference between the two, and 63 found that the equation performed significantly better than the person. Only eight of the studies found that people were significantly better predictors of the task at hand. If you're keeping score, that's just under a 6% win rate for the people and their intuition, and a 46% rate of clear losses.
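The percentages quoted above follow directly from the study counts. A short check in Python, using only the numbers given in the paragraph (the variable names are mine):

```python
# Counts quoted in the text: 136 studies in total.
total = 136
no_difference = 65
algorithm_better = 63
human_better = 8

# The three outcomes account for every study.
assert no_difference + algorithm_better + human_better == total

human_win_rate = human_better / total       # share of studies where people won
human_loss_rate = algorithm_better / total  # share where the equation won

print(f"human win rate:  {human_win_rate:.1%}")   # 5.9%, i.e. just under 6%
print(f"human loss rate: {human_loss_rate:.1%}")  # 46.3%
```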

So why do we continue to place so much stock in intuition and expert judgment? I ask this question in all seriousness. Overall, we get inferior decisions and outcomes in crucial situations when we rely on human judgment and intuition instead of on hard, cold, boring data and math. This may be an uncomfortable conclusion, especially for today's intuitive experts, but so what? I can't think of a good reason for putting their interests over the interests of patients, customers, shareholders, and others affected by their judgments.

So do we just dispense with the human experts altogether, or take away all their discretion and tell them to do whatever the computer says? In a few situations, this is exactly what's been done. For most of us, our credit scores are an excellent predictor of whether we'll pay back a loan, and banks have long relied on them to make automated yes/no decisions about offering credit. (The sub-prime mortgage meltdown stemmed in part from the fact that lenders started ignoring or downplaying credit scores in their desire to keep the money flowing. This wasn't intuition as much as rank greed, but it shows another important aspect of relying on algorithms: They're not greedy, either).

In most cases, though, it's not feasible or smart to take people out of the decision-making loop entirely. When this is the case, a wise move is to follow the trail being blazed by practitioners of evidence-based medicine, and to place human decision makers in the middle of a computer-mediated process that presents an initial answer or decision generated from the best available data and knowledge. In many cases, this answer will be computer generated and statistically based. It gives the expert involved the opportunity to override the default decision. It monitors how often overrides occur, and why. It feeds back data on override frequency to both the experts and their bosses. It monitors outcomes/results of the decision (if possible) so that both algorithms and intuition can be improved.

Over time, we'll get more data, more powerful computers, and better predictive algorithms. We'll also do better at helping group-level (as opposed to individual) decision making, since many organizations require consensus for important decisions. This means that the 'market share' of computer automated or mediated decisions should go up, and intuition's market share should go down. We can feel sorry for the human experts whose roles will be diminished as this happens. I'm more inclined, however, to feel sorry for the people on the receiving end of today's intuitive decisions and judgments.

What do you think? Am I being too hard on intuitive decision making, or not hard enough? Can experts and algorithms learn to get along? Have you seen cases where they're doing so? Leave a comment, please, and let us know.

Labels: algorithm, analytics, data, Data analyse, decision, decision making, fact, facts, intuition, mining, prediction, Predictive, random

Thursday, June 28, 2012

Ten steps to predictive success

Follow these best practices to ensure a successful foray into predictive analytics.

1. Define the business proposition. What is the business problem you are trying to solve? What is the question you're trying to answer? Think like a business leader first and an analyst or IT expert second.

2. Line up a business champion. Having the support of a key executive and a stakeholder is crucial. Whenever possible help the stakeholder to become the initiator and champion of the project.

3. Start off with a quick win. Find a well-defined business problem where analytics can bring value by showing measurable results. Start small and use simple models to build credibility.

4. Know the data you have. Do you have enough data, enough history and enough granularity in the data to feed your proposed model? Getting it into the right form is the biggest part of any first-time predictive analytics project.

5. Get professional help. A statistical background and a little training aren't enough: Creating predictive models is different from traditional descriptive analytics, and is as much an art as it is a science. Get help for that first win before striking out on your own.

6. Be sure the decision maker is prepared to act. It's not enough to have a prescribed action plan. The results may dictate actions that are counterintuitive. If the business decision makers won't act or aren't in a position to do so, you're wasting your time, so get a strong commitment up front.

7. Don't get ahead of yourself. Stay within the scope of the defined project, even if success breeds pressure to expand the use of your current model. Good analytics sells itself, but overextending can result in an unreliable model that will kill credibility.

8. Communicate the results in business language. Don't discuss probabilities and variances. Do talk revenue impact and fulfillment of business objectives. Use data visualization tools to hammer home the point.

9. Test, revise, repeat. Start small, test, revise and test again. Conduct A/B testing to demonstrate value. Present the results, gain critical mass, then scale out.

10. Hire me to implement the above steps with success :)

Labels: accuracy, analysis, analytics, business, credibility, data, implement, implemmenation, intelligence, language, Predictive, proposition, revise, test

Thursday, June 21, 2012

Bankers call for advanced analytics

Technology officials in the banking industry are deeply interested in the future of business intelligence, specifically predictive analytics processes that can analyze customer behavior. A recent Computing report found that financial officials are able to draw deeper analysis than retailers. Both bankers and store owners are interested in creating conditions that could leave customers feeling free to spend, with banks eager to drive customer dollars to their own line of payment cards.

Targeted offers

As Computing pointed out, banks have access to an important and unique data source for analytics - transaction data from customers' credit cards. Each use of a credit card contains a wealth of information - where it was used, what type of merchant made the sale. Companies can combine these data points to create a picture of customer interests, which allows them to create an environment that encourages further spending and incentives that cardholders will want.

"The data is broader than a retailer would get, so it can go very deep and build meaningful profiles of customers. They can then ask, 'Six months ago, this individual was shopping at John Lewis and now they're shopping in Primark. What does that tell me?'" analytics officer Andrew Jennings told the source. "Banks are not very good at this, but the competitive environment is driving them towards [being good at it]. That's what we're seeing today."

According to Computing, Jennings also stated that while banks have a depth of data that cannot be matched by individual merchants, the stores are more experienced at actually creating analytics models. He mentioned that there is room for alliances between stores and card providers. Banks can agree to give retailers payments for each transaction placed on that institution's payment cards. Financial institutions can also create programs that give rewards directly to customers if they spend at certain allied merchants.

Unique skillsets

TechTarget recently examined efforts by companies to take predictive information from their data. The source consulted with strategic analytics expert Jennifer Golec, who described the ideal analyst's role as threefold - programmer, data scientist and storyteller. They must have programming know-how to deal with the complex and large data sets needed to make a predictive model. The data science will come in handy when developing processes that employ multiple variables. The storytelling flair will help analytics teams explain their findings in clear, business-focused terms to the rest of the company.

P.S.

A post is coming soon about a Business Intelligence solution focusing on bank customers and their behaviour. I applied for the DnBNOR innovation prize in 2009 but was ignored, maybe because BI analytics was not that topical then. STAY TUNED!

Labels: analytics, banking, banks, behaviour, business, computing, Customer, data, depth, intelligence, mining, models, predictive analytics

Wednesday, June 20, 2012

Time to Invest: Predicting What’s Next for Technology in Hospitality

3/1/2012

Douglas C. Rice

One of the biggest challenges for any technology executive is predicting the landscape of toolsets and IT infrastructure that will be available in the future. If you make the right choice, today’s investments may last for 10 or even 20 years. In contrast, the wrong choice could force you to replace core elements of your systems strategy in half that time, or less.

The hospitality industry is largely a consumer of these building blocks, which include such things as network protocols (think TCP/IP), materials (silicon, copper, fiber), data protocols (SQL, ODBC), operating systems (Linux, Windows, iOS, Android), and presentation and messaging protocols (HTML, SOAP). These are not developed for hospitality; rather they serve a wide variety of consumer and business needs. The building blocks just referenced are familiar names in the industry now, but how can you determine what the building blocks of the future will be?

One of the best lessons I learned from a wise person many years ago was that if you want to predict the future, find out where the big money is being invested. In technology, this means learning where the industry giants are investing their billions in research and development (R&D) – companies like Microsoft, Intel, Apple®, Cisco, Google, Oracle, IBM and AT&T, to name just a few. When many of them invest in the same building blocks, you can count on those building blocks becoming mainstream and supportable for many years to come. If you were watching these barometers, you foresaw the end of the mainframe era. You also saw the Internet coming years before the dot-com boom began, and you anticipated the mobile app revolution.

A Window to the Future

One of the great privileges I enjoy from running one of the hotel technology industry’s largest trade associations is the frequent opportunity to see the world from the vantage point of many different industry technology leaders, including those that focus on hospitality, as well as those serving the broader technology space. HTNG’s regular face-to-face meetings of industry technology leaders offer great insights into where technology industry leaders expect to go in coming years, and how hospitality technology providers view those trends. These insights provide clues as to which investments will be future proof and which will be risks.

The Cloud

Although no one really agrees on the meaning of the word, there is no question that the cloud is by far the biggest area of investment. Microsoft, Amazon, Force.com, Apple, and now even networking companies like Cisco are placing huge bets on moving complexity and cost up the wire, away from the user and into data centers where they can benefit from scalability, shared support resources and load balancing. You can argue that much of the money being spent is on marketing hype rather than technology, and there is undoubtedly some truth to that point of view. But these companies would not spend money on marketing if they didn’t expect sales, which means they expect to deliver product. We are only in the early days of the cloud revolution, but the amount of money these companies are spending ensures that it will catch on.

One of the most important aspects of this, from a hospitality perspective, is the development of cloud service brokerages. In an industry where the dozens of different systems controlling a hotel must be mashed together from different parties – at a minimum this includes the building owner, the management company and the franchisor – if services are going to be cloud based, there must be cloud-level interoperability. Brokerage services can be thought of as cloud-based middleware that ensure robust and reliable communication between cloud-based systems operating in different clouds. They are what will enable a cloud-based CRS running in the Force.com cloud, for example, to easily connect with a cloud-based PMS running on Microsoft Azure. They can also allow a hotel company to engage a single vendor to manage the aggregation, integration, customization and governance of cloud services. Intel is one of many companies making big investments in the cloud services brokerage arena, which by definition are independent of any single cloud services provider.

This is good news for hospitality. It holds the promise of relieving the hotel owner of responsibility for managing the operation and integration of premise-based systems, with associated costs for deployment, equipment and maintenance performed by on-site or locally based staff. There is a healthy debate as to which technology services must remain premise based to avoid major problems in the event of network outages. But the number of hotel technologies that are proving to be robust in cloud deployments – at least in parts of the world with good Internet access – is growing every year as obstacles are overcome. There is a distinct possibility that every aspect of hotel technology except for end-user devices and portions of the network infrastructure may ultimately move to the cloud.

Mobility

Turning from infrastructure to hardware, it’s hard to deny that the big money is moving to mobility. Apple may have been the first of the megacompanies to figure this out – arguably, they became a megacompany by doing so – but other giants like Samsung, Microsoft and Amazon have also been leading the charge. Tablets have not yet fully replaced PCs in business travel, but the gap is narrowing rapidly. Indeed, the form factor of notebooks is getting smaller as that of tablets gets larger. We are fast approaching the day when the difference between the two is the presence or absence of a paper-thin keyboard.

For hospitality, this creates both opportunity and challenge. Mobility gives us the ability to communicate with our guests and staff in real time. This capability can be used to both define new service models and revenue streams, and to improve existing ones. Today’s challenge is that mobility requires massive investment in wireless infrastructure and bandwidth. (More about that challenge in the third trend.)

The key takeaway for hospitality is that when you invest in user interfaces, it will typically be wise to design for mobile devices first, rather than for PCs. Certainly this is true for applications that face guests, but also for staff-facing applications where the staff is or could benefit from being mobile. This includes a large proportion of front-of-house, back-of-house and guest-facing hospitality applications.

Don’t bet on a particular operating system; the leadership in this area will change based on competitive dynamics outside the control of anyone in hospitality. Multiplatform toolsets such as HTML5 are widely supported and are becoming de facto standards for deployment of applications across multiple platforms. While not yet perfected in all environments, HTML5 is the clear winner in overall investment by mobile operating system and device manufacturers.

Cellular Offload

Mobile devices create the need for massive bandwidth. iBAHN collects extensive data on these trends, which it has generously shared with the industry, and the data is downright scary: bandwidth requirements are roughly doubling every year, with mobile devices leading the way.
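To see what "roughly doubling every year" implies for capacity planning, here is a small compound-growth sketch. The starting figure of 100 Mbps and the helper name `projected_bandwidth` are assumptions for illustration only and do not come from the iBAHN data.

```python
def projected_bandwidth(current_mbps, years, annual_factor=2.0):
    """Project bandwidth demand assuming it grows by annual_factor each year."""
    return current_mbps * annual_factor ** years


# Hypothetical hotel that needs 100 Mbps today.
for year in range(6):
    print(year, projected_bandwidth(100, year))
```

Under this assumption, demand after five years is 32 times today's figure, which is why a one-off upgrade sized for current traffic is quickly outgrown.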

Many hotels have shortchanged the investment in upgrading bandwidth and supporting Wi-Fi infrastructure, believing that the migration of mobile devices to 4G/LTE cellular technologies will solve the problem by ultimately reducing or eliminating Wi-Fi. But a look at where the megacarriers are investing proves this assumption completely false.

Carriers such as AT&T, Verizon and Sprint realized in 2007 to 2008 that the data tsunami was coming, and there was simply not enough cellular radio spectrum for them to outrun it, even given future advances in cellular technology through LTE and beyond. Carriers such as these and their counterparts in other countries know they cannot satisfy the demand for mobile data with cellular technologies, at least not in densely populated areas. Their strategies for satisfying the need are based on moving cellular traffic to terrestrial networks – meaning Wi-Fi. Virtually all major carriers in developed countries are aggressively investing in what they call offload, meaning they are building out or gaining access to Wi-Fi networks, and enabling their devices to roam onto these networks automatically. If you have an AT&T smartphone and leave wireless enabled, and walk into a Starbucks, McDonalds, American Airlines Club room or Hilton-branded U.S. hotel, you have probably already experienced this. These few examples exemplify the economics: in congested areas, it is far cheaper for a cellular carrier to build or fund a Wi-Fi network, than to install an additional cell tower and/or buy additional spectrum.

This is good news for hotels, because it means that cellular companies have an economic reason to help fund hotel Wi-Fi networks. In New York and San Francisco, where cellular coverage is saturated, some carriers have gone so far as to offer free Wi-Fi networks to certain hotels, because it was the least expensive option for them to satisfy the needs of their customers. Hotels in less congested areas won’t get free Wi-Fi networks anytime soon, but many hotels can now find, at a minimum, willing investment partners to help offset the cost of a Wi-Fi network in return for the ability to route cellular traffic through it. In remote areas, the cellular network may be sufficient to meet consumer needs. Urban and suburban hotels are well positioned to benefit from this trend, but will need to forge appropriate alliances with carriers to do so. Over time, carriers expect roaming models to develop, enabling phones from different carriers to offload to a single Wi-Fi network, with payments to the provider of that network based on traffic volumes or other factors.

There are many risky bets in technology, but a few safe ones. When you are making decisions on investments, strive to determine the major trends, and then invest in solutions that align with those trends. If you aren’t looking at the cloud, expecting the user interface to migrate to mobile devices, or thinking about how your hotel can benefit from carrier investments in Wi-Fi, you’re probably missing the boat.

Douglas C. Rice is the executive vice president and CEO of Hotel Technology Next Generation.

www.htng.org

3/1/2012

Douglas C. Rice

One of the biggest challenges for any technology executive is predicting the landscape of toolsets and IT infrastructure that will be available in the future. If you make the right choice, today’s investments may last for 10 or even 20 years. In contrast, the wrong choice could force you to replace core elements of your systems strategy in half that time, or less.

The hospitality industry is largely a consumer of these building blocks, which include such things as network protocols (think TCP/IP), materials (silicon, copper, fiber), data protocols (SQL, ODBC), operating systems (Linux, Windows, iOS, Android), and presentation and messaging protocols (HTML, SOAP). These are not developed for hospitality; rather they serve a wide variety of consumer and business needs. The building blocks just referenced are familiar names in the industry now, but how can you determine what the building blocks of the future will be?

One of the best lessons I learned from a wise person many years ago was that if you want to predict the future, find out where the big money is being invested. In technology, this means learning where the industry giants are investing their billions in research and development (R&D) – companies like Microsoft, Intel, Apple®, Cisco, Google, Oracle, IBM and AT&T, to name just a few. When many of them invest in the same building blocks, you can count on those building blocks becoming mainstream and supportable for many years to come. If you were watching these barometers, you foresaw the end of the mainframe era. You also saw the Internet coming years before the dot-com boom began, and you anticipated the mobile app revolution.

A Window to the Future

One of the great privileges I enjoy from running one of the hotel technology industry’s largest trade associations is the frequent opportunity to see the world from the vantage point of many different industry technology leaders, including those that focus on hospitality, as well as those serving the broader technology space. HTNG’s regular face-to-face meetings of industry technology leaders offer great insights into where technology industry leaders expect to go in coming years, and how hospitality technology providers view those trends. These insights provide clues as to which investments will be future proof and which will be risks.

The Cloud

Although no one really agrees on the meaning of the word, there is no question that the cloud is by far the biggest area of investment. Microsoft, Amazon, Force.com, Apple, and now even networking companies like Cisco are placing huge bets on moving complexity and cost up the wire, away from the user and into data centers where they can benefit from scalability, shared support resources and load balancing. You can argue that much of the money being spent is on marketing hype rather than technology, and there is undoubtedly some truth to that point of view. But these companies would not spend money on marketing if they didn’t expect sales, which means they expect to deliver product. We are only in the early days of the cloud revolution, but the amount of money these companies are spending ensures that it will catch on.

One of the most important aspects of this, from a hospitality perspective, is the development of cloud service brokerages. In an industry where the dozens of different systems controlling a hotel must be mashed together from different parties (at a minimum the building owner, the management company and the franchisor), cloud-based services require cloud-level interoperability. Brokerage services can be thought of as cloud-based middleware that ensures robust and reliable communication between cloud-based systems operating in different clouds. They are what will enable a cloud-based CRS running in the Force.com cloud, for example, to easily connect with a cloud-based PMS running on Microsoft Azure. They can also allow a hotel company to engage a single vendor to manage the aggregation, integration, customization and governance of cloud services. Intel is one of many companies making big investments in cloud services brokerages, which by definition are independent of any single cloud services provider.

This is good news for hospitality. It holds the promise of relieving the hotel owner of responsibility for managing the operation and integration of premise-based systems, with associated costs for deployment, equipment and maintenance performed by on-site or locally based staff. There is a healthy debate as to which technology services must remain premise based to avoid major problems in the event of network outages. But the number of hotel technologies that are proving to be robust in cloud deployments – at least in parts of the world with good Internet access – is growing every year as obstacles are overcome. There is a distinct possibility that every aspect of hotel technology except for end-user devices and portions of the network infrastructure may ultimately move to the cloud.

Mobility

Turning from infrastructure to hardware, it’s hard to deny that the big money is moving to mobility. Apple may have been the first of the megacompanies to figure this out – arguably, it became a megacompany by doing so – but other giants like Samsung, Microsoft and Amazon have also been leading the charge. Tablets have not yet fully replaced PCs in business travel, but the gap is narrowing rapidly. Indeed, the form factor of notebooks is getting smaller as that of tablets gets larger. We are fast approaching the day when the only difference between the two is the presence or absence of a paper-thin keyboard.

For hospitality, this creates both opportunity and challenge. Mobility gives us the ability to communicate with our guests and staff in real time. This capability can be used both to define new service models and revenue streams and to improve existing ones. Today’s challenge is that mobility requires massive investment in wireless infrastructure and bandwidth. (More about that challenge in the third trend.)

The key takeaway for hospitality is that when you invest in user interfaces, it will typically be wise to design for mobile devices first, rather than for PCs. Certainly this is true for applications that face guests, but it is also true for staff-facing applications where the staff is, or could benefit from being, mobile. This includes a large proportion of front-of-house, back-of-house and guest-facing hospitality applications.


Labels: Amazon, apple, business, future, generation, Google, hospitality, hotels, internet, microsoft, next, operating, predicting, prediction, protocols, systems, technology

The future of Technology

After some serious posts, it is time to laugh a bit. A parody of Apple technology can make your day:

Labels: apple, Humor, innovation, ipad, iphone, mactini, mactini nano, microsoft, nano, parody, technology

Wednesday, June 13, 2012

Top 5 Certifications ranked based on market request